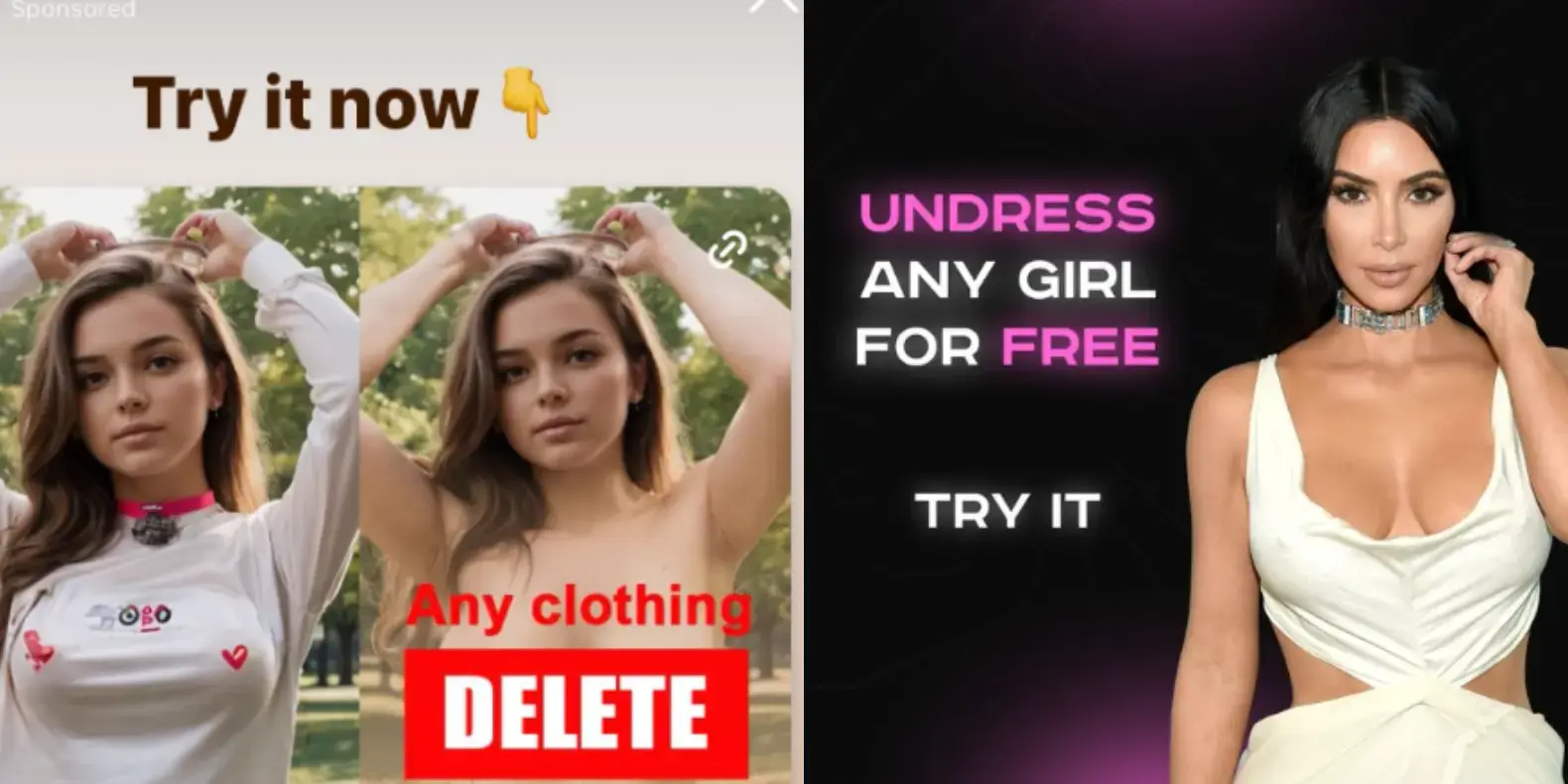

Instagram is profiting from several ads that invite people to create nonconsensual nude images with AI image generation apps, once again showing that some of the most harmful applications of AI tools are not hidden in the dark corners of the internet, but are actively promoted to users by social media companies unable or unwilling to enforce their policies about who can buy ads on their platforms.

While parent company Meta’s Ad Library, which archives ads on its platforms, who paid for them, and where and when they were posted, shows that the company has taken down several of these ads previously, many ads that explicitly invited users to create nudes, and some of the ad buyers behind them, remained up until I reached out to Meta for comment. Some of these ads were for the best-known nonconsensual “undress” or “nudify” services on the internet.

God damn I hate this fucking AI bullshit so god damn much.

I don’t know about you, but I started to notice that not everything that was printed on paper was truthful when I was around ten or eleven years old.

Ok, but acting like you can’t trust any sources of info on anything is pretty destructive also. There are still some fairly reliable sources out there. Dismiss everything and you’re left with conspiracy theories.

In the long run everything might be false, even your own memory changes over time and may be affected by external forces.

So, maybe there will be no way to tell the truth except by experiencing it firsthand, and we will once again live like the ancient Greeks, pondering things.

To be fair, I hope that science and critical thinking might help us distinguish what is true from what isn't, but that would only apply to abstract things, as all the concrete things might be fabricated.

I don’t remember saying all books were good. But it’s often possible to find well edited and curated material in print.