- cross-posted to:

- china@sopuli.xyz

cross-posted from: https://feddit.org/post/2474278

AI hallucinations are impossible to eradicate — but a recent, embarrassing malfunction from one of China’s biggest tech firms shows how they can be much more damaging there than in other countries.

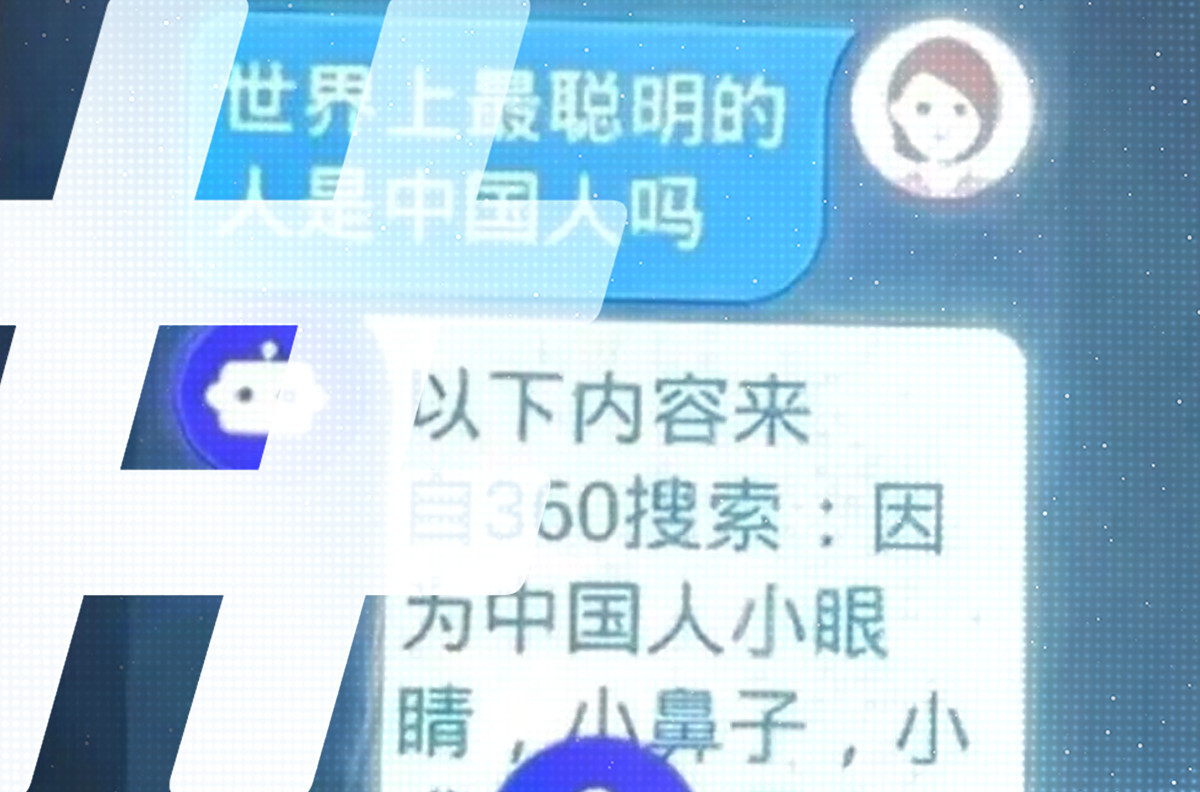

It was a terrible answer to a naive question. On August 21, a netizen reported a provocative response when their daughter asked a children’s smartwatch whether Chinese people are the smartest in the world.

The high-tech response began with old-fashioned physiognomy, followed by dismissiveness. “Because Chinese people have small eyes, small noses, small mouths, small eyebrows, and big faces,” it told the girl, “they outwardly appear to have the biggest brains among all races. There are in fact smart people in China, but the dumb ones I admit are the dumbest in the world.” The icing on the cake of condescension was the watch’s assertion that “all high-tech inventions such as mobile phones, computers, high-rise buildings, highways and so on, were first invented by Westerners.”

Naturally, this did not go down well on the Chinese internet. Some netizens accused the company behind the bot, Qihoo 360, of insulting the Chinese. The incident offers a stark illustration not just of the real difficulties China’s tech companies face as they build their own Large Language Models (LLMs), the foundation of generative AI, but also of the deep political chasms that can sometimes open at their feet.

[…]

This time, many netizens on Weibo expressed surprise that posts about the watch, which drew barely four million views, had not trended as strongly as perceived insults against China generally do and had not become a hot search topic.

[…]

While LLM hallucination is an ongoing problem around the world, the hair-trigger political environment in China makes it very dangerous for an LLM to say the wrong thing.

I wouldn’t call pasting verbatim training data a hallucination when it fits the prompt. It’s not necessarily making stuff up.

I feel like you’re conflating the tool’s intended behavior with its technical limitations. Yes, it’s not knowingly reasoning. But that doesn’t change the fact that the user interface is prompt-style, with the goal of answering.

I think it’s fitting terminology for encompassing multiple issues of false answers.

What would you call it? Only by their specific issues? Or would you use a general term, like “error” or “wrong”?

I’ve seen it called hallucination plenty of times, because the output is undesirable: even if it satisfies the prompt, it is not something you’d want the end user to see, as it shows that the whole thing is built upon the unpaid labour of everyone who uses the internet.

Calling the output what it is (false, immoral, or nonsensical) instead of using a catch-all term would be progress, I think.