
But, that said, when I messed around with AI image generators pretty much any kind of prompt that included woman or female designations tended towards sexualized versions, even to the point of violating its own content policy.

Tried it on the Copilot app, and one result showed an Asian woman; it wasn’t sexual but indeed very sexy in style.

Prompt: Generate me a picture of a female wizard reading a massive book of spells

Pictures:

Edit:

Female wizard: Kinda magical fantasy. Has good intentions

Witch: Spooky and mysterious. Halloween themes

Sorceress: Same as wizard but with more selfish/bad intentions.

What is sexy in style here? They are wearing loose, long-sleeved robes up to the neck. Makeup and hair are just following current trends.

*attractive

My bad.

My experience has been that they have a tendency to make overly attractive men too. Getting it to generate anyone average, never mind ugly or with deformities (e.g. scars), is really hard.

It bothers me that they all look like they’re in their teens or 20s, when a male wizard would inevitably be shown as anywhere from middle-aged to Gandalf.

I bet it just always makes women young in every context.

Anyway most of them look like they’re from an old 3D Japanese RPG or CG anime. Round face with pointy chin, plastic-y smooth skin.

I’ll note that anime and Asian RPG characters often have a light skin tone (another can of worms there) that can cause foreign viewers to perceive them as white even while Japanese viewers perceive them as asian. Animation and similarly stylized art involves a level of abstraction and cultural interpretation that might not be there (at least not in exactly the same way) if we were talking about race (or gender, or whatever else) with regards to more realistic art.

Edit: this also reminds me of Disney’s notorious “same face, same profile” problem with female characters in their 3D animated films. Male characters can be any of a wild variety of shapes, but a Disney princess is essentially round-faced, slim, and huge-eyed. Even just looking at different slim, round-ish faced male characters, I think you’ll find more variety in their portrayals within that group than amongst the Disney princess group.

It’s a problem with the “no uglies” negative prompt, and to which images “ugly” was applied by humans tagging the training dataset.

If the taggers think that so much as a single wrinkle on a woman is “ugly”, but a man has to be missing half his teeth and have a crooked face to start looking “ugly”… well, this is what we get.

Pretty people get photographed/painted more, resulting in much of the training data being pretty people, thus pretty people get generated more frequently.

Part of that is just smoothness and symmetry, which we consider attractive attributes but which are also a consequence of the averaging that the algorithm is doing (which is why AI images all look various sorts of “melty”).

They can be considered petty, fcking whres /s

That’s DALL-E. DALL-E is different than Stable Diffusion, which is different from Midjourney, which is different from the many NAI anime models out there.

We need to stop treating LD models like they are all the same thing. Models are based on the data they are trained on. Sure, a lot of them started out from a Stable Diffusion model, but that’s not always the case, and enough training can have them go off in specialized directions.

Either I am blind or comment OP doesn’t mention SD or any other specific model.

The pictures in your embedded widget on your post say “Unterstützt von DALL-E 3”. Also, the very start of the article says “When Melissa Heikkilä tried Lensa’s Magic Avatars”, which uses Stable Diffusion, but I’m not sure if they further trained it themselves.

The point is that “Lensa’s Magic Avatars” isn’t all of AI, and clickbait titles like this need to stop treating it like that. It’s the latent diffusion equivalent of this.


Cute wizard girl w

wasn’t sexual but indeed very sexy in style.

Those characters have child-like facial proportions. 🧐

Take a look at 25 year old asian girls.

They all look like or close to that…

Yeah, if you go back through hundreds of years of artwork, most of it is pictures of women. Some of them are nude. There are many, many artists who only draw women, modern or classical. And there’s a ton of male Japanese artists from centuries ago who did the same thing.

I asked it to create a sort of witchy, sorceress character, and in many of the generations she was fully topless with her boobs out, despite me not asking for that, and even when I explicitly put “fully clothed” into the prompt. There was one image that the system created and then removed, threatening me with a ban for it being too sexualized, despite me putting no sexual language in the prompt and it being all the AI.

That’s just one model, and obviously not Stable Diffusion. LD models are just based on whatever they were trained on. If you don’t like it, download another model trained on something else and try it out. Or train one yourself.

Also, I wish everybody would download a SD client and just use this software locally. All of these toy websites are shit, and local clients aren’t going to threaten to ban you because of what you generated. It’s a good learning experience to figure out the software, and these tools are useful for more things than just bitching about the tech on the web.

Because we have been pornifying asian women on the internet for decades. Does that really beg the question posed in the title?

You’re absolutely correct, yet ask someone who’s very pro AI and they might dismiss such claims as “needing better prompts”. Also many people may not be as tech informed as you are, and bringing light to algorithmic bias can help them understand and navigate the world we now live in. Dismissing the article just because you already know the answer doesn’t really encourage people to participate in a discussion.


If you genuinely don’t know: because it’s an attention-grabbing title (which isn’t inherently bad)

It’s really hard getting dark skin sometimes. A lot of the time it’s not even just the model, LoRAs and Textual Inversions make the skin lighter again so you have to try even harder. It’s going to take conscious effort from people to tune models that are inclusive. With the way media is biased right now, I feel like it’s going to take a lot of effort.

“Inclusive models” would need to be larger.

Right now people seem to prefer smaller quantized models, with whatever set of even smaller LoRAs on top, that make them output what they want… and only include more generic elements in the base model.

“Inclusive models” would need to be larger.

[citation needed]

To my understanding the problem is that the models reproduce biases in the training material, not model size. Alignment is currently a manual process after the initial unsupervised learning phase, often done by click-workers (Reinforcement Learning from Human Feedback, RLHF), and aimed at coaxing the model towards more “politically correct” outputs. But ultimately at that time the damage is already done, since the bias is encoded in the model weights and will resurface in the outputs just randomly or if you “jailbreak” enough.

In the context of the OP, if your training material has a high volume of sexualised depictions of Asian women the model will reproduce that in its outputs. Which is also the argument the article makes. So what you need for more inclusive models is essentially a de-biased training set designed with that specific purpose in mind.

I’m glad to be corrected here, especially if you have any sources to look at.

You can cite me on this:

First, there is no such thing as a “de-biased” training set, only sets with whatever target series of biases you define for them to reflect.

Then, there are only two ways to change the biases of a training set:

- either you replace data until you reach your desired objective, which will reduce the model’s quality for any of the alternatives

- or you add data until you reach your desired objective, which will require an increased size to encode the increased amount of data, or the model’s quality will go down for all cases (you’d be diluting every other case)
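To put rough numbers on the two options in the list above, here is a back-of-the-envelope sketch. The dataset size and shares are made up for illustration, and the function names are hypothetical; nothing here comes from a real training set.

```python
def images_to_replace(n_total: int, current_share: float, target_share: float) -> int:
    """Option 1: swap skewed images for other ones; dataset size stays the same."""
    return round(n_total * (current_share - target_share))


def images_to_add(n_total: int, current_share: float, target_share: float) -> int:
    """Option 2: keep everything and add other images until the skewed
    ones make up only target_share of the (now larger) dataset."""
    skewed = n_total * current_share
    return round(skewed / target_share - n_total)


# Made-up example: 100,000 images for some label, half of them skewed,
# and we want the skewed share down to a quarter.
n, current, target = 100_000, 0.5, 0.25

print("replace:", images_to_replace(n, current, target))
print("add:", images_to_add(n, current, target))
```

With these numbers, replacing keeps the set at 100,000 images but throws a quarter of it away, while adding doubles the set. That is the trade-off the two bullet points describe.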

For reference, LoRAs are a sledgehammer approach to apply the first way.

As for the article, it’s talking about the output of some app, with unknown extra prompting and LoRAs getting applied in the back, so it’s worthless as a discussion of the underlying model, much less as a discussion of all models.

First, there is no such thing as a “de-biased” training set, only sets with whatever target series of biases you define for them to reflect.

Yes, I obviously meant “de-biased” by definition of whoever makes the set. Didn’t think it worth mentioning, as it seems self evident. But again, in concrete terms regarding the OP this just means not having your dataset skewed towards sexualised depictions of certain groups.

- either you replace data until you reach your desired objective, which will reduce the model’s quality for any of the alternatives

[…]

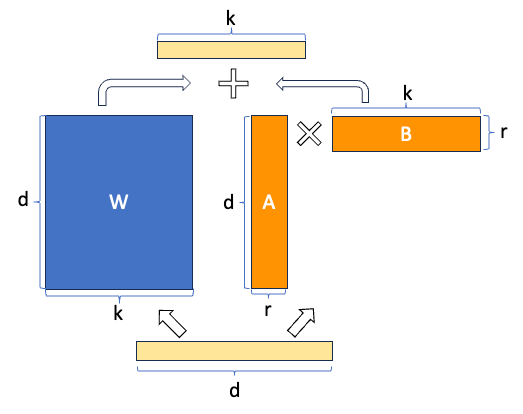

For reference, LoRAs are a sledgehammer approach to apply the first way.

The paper introducing LoRA seems to disagree (emphasis mine):

We propose Low-Rank Adaptation, or LoRA, which freezes the pre-trained model weights and injects trainable rank decomposition matrices into each layer of the Transformer architecture, greatly reducing the number of trainable parameters for downstream tasks.

There is no data replaced, the model is not changed at all. In fact if I’m not misunderstanding it adds an additional neural network on top of the pre-trained one, i.e. it’s adding data instead of replacing any. Fighting bias with bias if you will.

And I think this is relevant to a discussion of all models, as reproduction of training set biases is something common to all neural networks.

That paper is correct (emphasis mine):

We propose Low-Rank Adaptation, or LoRA, which freezes the pre-trained model weights and injects trainable rank decomposition matrices into each layer of the Transformer architecture, greatly reducing the number of trainable parameters for downstream tasks.

You can see how it works in the “Introduction” section, particularly figure 1, or in this nice writeup:

https://dataman-ai.medium.com/fine-tune-a-gpt-lora-e9b72ad4ad3

LoRA is a “space and time efficient” technique to produce a modification matrix for each layer. It doesn’t introduce new layers, or add data to any layer. To the contrary, it’s bludgeoning all the separate values in each layer, and modifying each whole column and whole row by the same delta (or only a few deltas, in any case with rank r ≪ k for A and r ≪ d for B, where the layer’s weight matrix W is d × k).

Turns out… that’s enough to apply some broad strokes type of changes to a model, which still limps along thanks to the remaining value variation. But don’t be mistaken: with each additional LoRA applied, a model loses some of its finer details, until at some point it descends into total nonsense.
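For anyone following along, here is a rough numpy sketch of the update the quoted paper describes: a frozen weight matrix plus the product of two small trainable matrices. The layer shape, the rank, and the random "trained" values are placeholders chosen for illustration, not anything taken from a real model.

```python
import numpy as np

rng = np.random.default_rng(0)

d, k, r = 512, 256, 4          # layer is d x k; rank r is a hypothetical choice
W0 = rng.normal(size=(d, k))   # frozen pre-trained weight, never updated

# Trainable low-rank factors. The paper initialises B to zero and A randomly,
# so the adapted layer starts out identical to the original one.
B = np.zeros((d, r))
A = rng.normal(scale=0.01, size=(r, k))


def adapted_weight(W0, B, A, scale=1.0):
    """Effective weight W0 + scale * (B @ A); W0 itself is left untouched."""
    return W0 + scale * (B @ A)


# Before any training the update is exactly zero.
assert np.array_equal(adapted_weight(W0, B, A), W0)

# Stand-in for training: random values play the role of learned ones.
B = rng.normal(scale=0.01, size=(d, r))
delta = B @ A                  # same shape as W0, but rank at most r

full_params = d * k
lora_params = d * r + r * k
print(f"full layer: {full_params} params, LoRA update: {lora_params} params")
print("rank of the update:", np.linalg.matrix_rank(delta))
```

The update touches every entry of the layer, yet it is built from far fewer numbers than the layer itself, and its rank cannot exceed r. Whether you call that "adding" or "bludgeoning" is the disagreement in this thread.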

I wouldn’t mind. I’m here for it.

Are you ready to run a 100B FP64 parameter model? Or even a 10B FP32 one?

Over time, I wouldn’t be surprised if 500B INT8 models became commonplace with neuromorphic RAM, but there’s still some time for that to happen.
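For a sense of scale, the weights-only memory for those model sizes is easy to work out. This is a minimal sketch that counts only the parameters themselves (no activations, optimizer state, or framework overhead) and uses decimal gigabytes.

```python
BYTES_PER_PARAM = {"FP64": 8, "FP32": 4, "FP16": 2, "INT8": 1}


def weights_gb(params: float, dtype: str) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[dtype] / 1e9


for label, params, dtype in [
    ("100B FP64", 100e9, "FP64"),
    ("10B FP32", 10e9, "FP32"),
    ("500B INT8", 500e9, "INT8"),
]:
    print(f"{label}: {weights_gb(params, dtype):,.0f} GB")
```

That works out to roughly 800 GB, 40 GB, and 500 GB respectively, before anything else a runtime needs.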

You don’t need that many parameters, 4 GB checkpoints work just fine.

For more inclusive models, or for current ones? In order to add something, either the size has to grow, or something would need to get pushed out (content, or quality). 4GB models are already at the limit of usefulness, both DALLE3 and SDXL run at about 12B parameters, so to make them “more inclusive” they’d have to grow.

And every single Asian game and anime tends to go for skimpy or virtual softcore with its female characters. You rarely see a female character in full armor.

Wrong question. The right question would be:

Why is AI as used in Lensa’s Magic Avatars App Pornifying Asian Women?

Ask Lensa to remove the “ugly” and similar negative prompts from their avatar generating App, and let’s see what comes out.

https://stable-diffusion-art.com/how-to-use-negative-prompts/#Universal_negative_prompt

For reference, check out how that same negative prompt turns a chubby-ish poorly shaved average guy, into a male pornstar, or a valet into a rich daddy’s boy.
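As background on why a negative prompt has that much pull: in Stable Diffusion-style samplers the negative prompt usually takes the place of the "empty" prompt in classifier-free guidance, so each denoising step is pushed toward the prompt and away from the negative prompt. The sketch below uses made-up 2-D vectors in place of real noise predictions, and the function name is hypothetical. It only illustrates the arithmetic, not any particular app's pipeline.

```python
import numpy as np


def guided_noise(eps_positive, eps_negative, guidance_scale=7.5):
    """Classifier-free guidance: start from the negative-prompt prediction and
    move toward the positive-prompt prediction, scaled by guidance_scale."""
    return eps_negative + guidance_scale * (eps_positive - eps_negative)


# Toy stand-ins for the denoiser's noise predictions (made-up numbers).
eps_prompt = np.array([1.0, 0.0])   # prediction for the user's prompt
eps_empty = np.array([0.0, 0.0])    # prediction for an empty negative prompt
eps_ugly = np.array([0.0, 1.0])     # prediction for a negative prompt like "ugly"

print(guided_noise(eps_prompt, eps_empty, guidance_scale=2.0))
print(guided_noise(eps_prompt, eps_ugly, guidance_scale=2.0))
```

In the second call the result moves into negative territory along the "ugly" axis. Whatever the training data associated with that tag gets actively steered away from, which is why the tagging matters so much.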

Can we please collectively get into the habit of editing these borderline-clickbait titles or at least add sub-titles explaining the real article? This isn’t reddit where you can’t edit anything and can’t add explanatory text!

Removed by mod

This is incredibly dismissive of the concerns raised and adds nothing to the discussion

If I had to guess, they probably did a shit job labeling the training data or used pre-labeled images. Now, where in the world could they have found huge amounts of pictures of women on the internet with the specific label of “Asian”?

Almost like, most of what determines the quality of the output is not “prompt engineering” but actually the back end work of labeling the training data properly, and you’re not actually saving much labor over more traditional methods, just making the labor more anonymous, easier to hide, and thus easier to exploit and devalue.

Almost like this shit is a massive farce just like the “metaverse” and crypto that will fail to be market viable and waste a shit ton of money that could have been spent on actually useful things.

They did literally nothing and seem to use the default Stable Diffusion model, which is supposed to be a tech demo. It would have been easy to put “(((nude, nudity, naked, sexual, violence, gore)))” as the negative prompt.

The problem is that negative prompts can help, but when the training data is so heavily poisoned in one direction, stuff gets through.

Because the Internet is for porn. Always has been, always will be.

Scroll through the trained models on civit.ai and you’ll quickly get a feeling of the dystopian level of “prettifying” everything in the AI-generation world.

I also once searched for “brown” just to see if any models were trained to create non-white-skinned people, and got shocked when the result was filled with models trained on Millie Bobby Brown from Stranger Things. I don’t even want to know what those models are used for.

dystopian level of “prettifying” everything in the AI-generation world.

So like all the ad campaigns, TV shows and movies in the real world?

From the first 10 models I saw, the first image was a woman 9 times…

Because simps.

Saved you a click.

Because white dudes fetishizing Asian women wrote the LLMs and pointed them at the training data

I work in tech and asian guys tend to outnumber white guys in it, especially if you combine east asian and south asian.

Are the images above supposed to depict “porn”? I’ve never seen porn like that.

In 2024, the brain washing of people is almost complete.

Sensuality is now porn. :)

Stable Diffusion is little more than content laundering. It cannot create anything more than what you put in.


You’re so confidently incorrect about something you clearly don’t know much about.

How is he wrong?

I’m not exposed to a huge amount of media coming out of Asia, outside of a handful of Korean shows that Netflix has picked up and anime. But like, if anime is any indicator, I’m not really surprised that the training data for Asian women is leaning more toward overt sexualization. Even setting aside the whole misogynistic ‘fan service’ thing, I don’t feel like I see as much representation of women who defy traditional gender roles as the last twenty or so years of Western media.

It certainly could be that anime is actually a huge outlier here, but if the training data is primarily from the English speaking web, it might be overrepresented anyway. But like, when it comes to weird AI image behaviors, it pays to think about the probable training data.

Like, Stable Diffusion seems to do a better job of rendering jewelry if you tell it to surround it with berries. Given the output, this seems to be due to Christmas-themed jewelry ads. It also tends to add a lot of bokeh for the same reason.

Garbage in, garbage out 🤷

Absolutely this. The reason AI defaults female into “female armor mode” is the same reason Excel has January February Maruary. Our spicy autocorrect overlords cannot extrapolate data in a direction that their training has no knowledge of.

You train on a bunch of reddit crap, you’re going to get neck beard reddit crap out. It’d look different if they only used art history books.

Seeing some of the replies that tried to dismiss the issue, and the general lack of concern from moderators about aggressive replies from AI apologists (in this thread but also in other AI-related threads), is disheartening.
